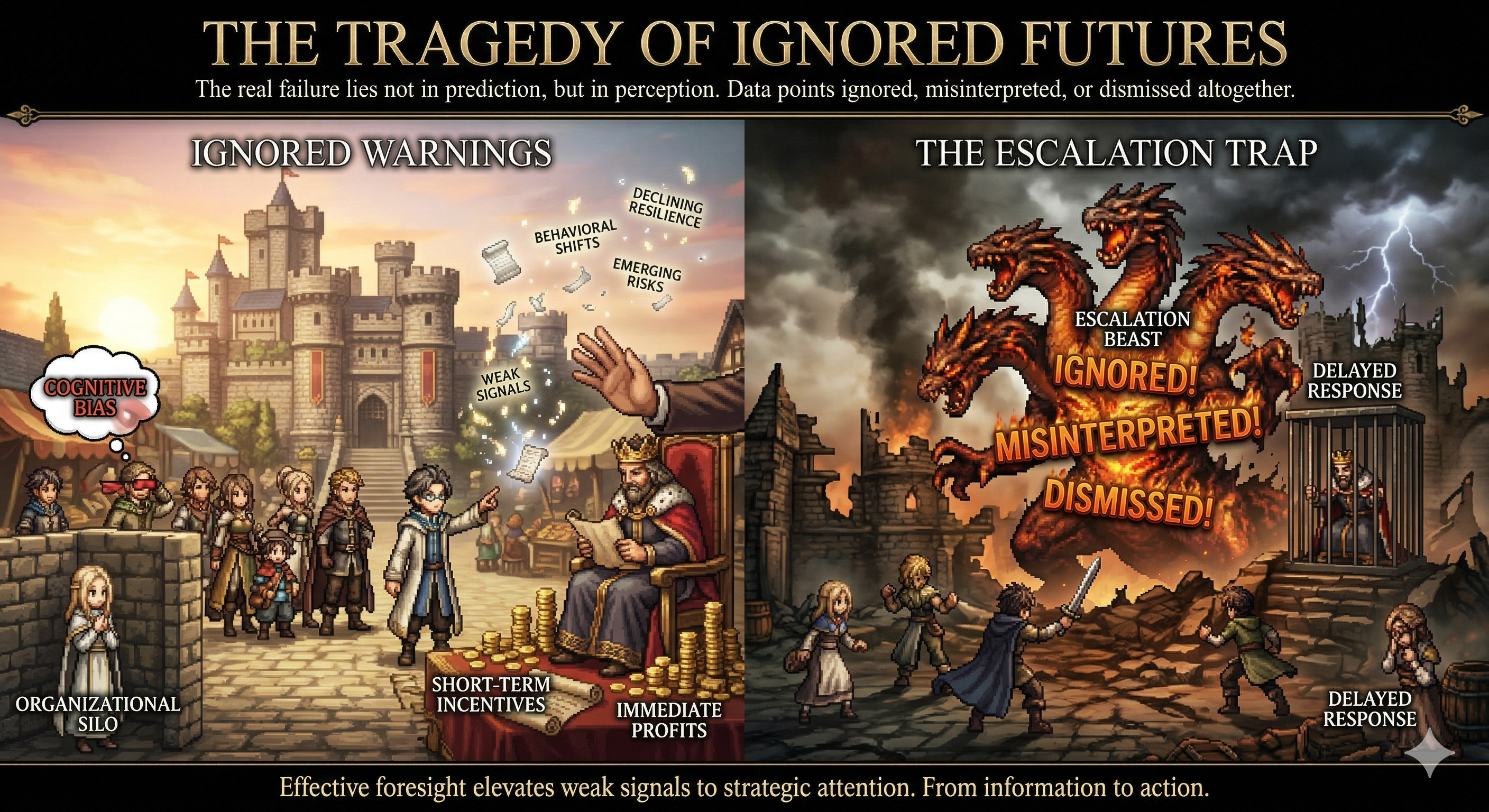

Contrary to popular belief, most crises are not truly unexpected. In many cases, the warning signs were already present—weak signals scattered across reports, data points, and expert observations. The real failure lies not in prediction, but in perception.

Foresight does not fail because the future is unknowable. It fails because signals are ignored, misinterpreted, or dismissed altogether. The result is a dangerous illusion that crises arrive suddenly, when in reality they have been developing quietly over time.

The Anatomy of Strategic Blindness

Strategic blindness occurs when organizations are unable—or unwilling—to see emerging risks despite available evidence. This is rarely due to a lack of data. Instead, it is often the result of deeper systemic issues.

First, cognitive biases filter out inconvenient signals, reinforcing existing beliefs. Second, organizational silos prevent information from flowing across departments, fragmenting the overall picture. Third, short-term incentives discourage long-term thinking, prioritizing immediate results over future resilience.

Together, these factors create an environment where early warnings are systematically overlooked.

Signals That Were There All Along

Many historical disruptions illustrate this pattern. Prior to major crises, indicators often exist in plain sight—declining system resilience, emerging behavioral shifts, or early technological breakthroughs. However, these signals are frequently dismissed because they appear insignificant in isolation.

The challenge of foresight is not just to detect signals, but to recognize their potential significance before they become obvious to everyone.

The Escalation Trap

One of the most dangerous dynamics in failed foresight is delayed response. When early signals are ignored, risks continue to evolve until they reach a tipping point. At that stage, the response becomes reactive, costly, and often insufficient.

This creates what can be described as the escalation trap: the longer action is delayed, the more severe and complex the problem becomes. What could have been managed as a minor adjustment turns into a full-scale crisis.

From Information to Action

Having access to information is not enough. Organizations often possess relevant data but fail to translate it into actionable insight. This gap between knowledge and action is where foresight breaks down.

Effective foresight requires not only detecting signals, but also integrating them into decision-making processes. This means creating mechanisms that elevate weak signals to strategic attention, even when they challenge dominant assumptions.

Building Systems That Can See

To avoid strategic blindness, organizations must design systems that are capable of seeing—and responding to—emerging change. This involves embedding horizon scanning practices, encouraging dissenting perspectives, and aligning incentives with long-term outcomes.

It also requires leadership that is willing to act under uncertainty, making decisions based not only on what is certain, but on what is plausible and potentially impactful.

Foresight, in this sense, is not just an analytical capability—it is an organizational discipline.

Conclusion: The Tragedy of Ignored Futures

The greatest tragedy in failed foresight is not that the future was unknown, but that it was visible—and ignored.

In a complex world, the cost of inaction often exceeds the cost of being wrong. Acting early carries uncertainty, but waiting for certainty guarantees that the response comes late.

Ultimately, foresight is not about predicting every outcome. It is about reducing the likelihood of being caught unprepared.

The question is no longer whether signals exist, but whether we are willing to see them and act on them.